Project Examples

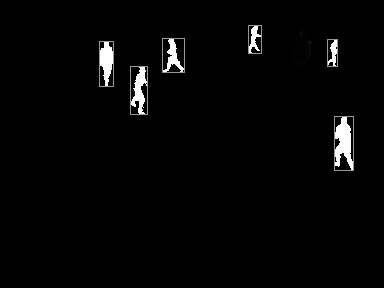

Counting the Crowd at Aalborg Carnival

Crowd counting provides useful insights into a given event for promoters, sponsors, and attendees, but reliable numbers are often hard to obtain using conventional methods. From a computer vision point of view, a carnival presents many challenges due to its scale, the crowd density, and the fact that many of the participants are dressed up in costumes, which makes it difficult to detect people. Using depth data captured from a zenithal viewpoint at one point on the parade route, we extract information about the participants, track them through the scene, and finally count each participant passing by the developed computer vision system.
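The final counting step described above can be illustrated with a small sketch. It assumes that tracking has already produced one list of centroid positions per participant and that the parade moves in the direction of increasing y. The names `count_crossings`, `tracks`, and `line_y`, and all of the sample data, are hypothetical and not taken from the project itself.

```python
from typing import Dict, List, Tuple

# One track per participant: the (x, y) centroid in each frame, in time order.
Track = List[Tuple[float, float]]


def count_crossings(tracks: Dict[int, Track], line_y: float) -> int:
    """Count tracks that start before the virtual line y = line_y and end on or past it.

    Only the first and last positions are compared, so a participant who
    wanders back and forth over the line is still counted at most once.
    """
    count = 0
    for positions in tracks.values():
        if len(positions) < 2:
            continue  # a single detection cannot show a crossing
        start_y = positions[0][1]
        end_y = positions[-1][1]
        if start_y < line_y <= end_y:
            count += 1
    return count


# Hypothetical tracks in image coordinates (assumption: parade moves toward larger y).
tracks = {
    1: [(10.0, 5.0), (11.0, 20.0), (12.0, 40.0)],  # passes the line
    2: [(30.0, 8.0), (31.0, 12.0)],                # never reaches the line
    3: [(50.0, 45.0), (50.0, 10.0)],               # moves against the parade direction
}
total = count_crossings(tracks, line_y=25.0)
print(total)
```

Comparing only the endpoints of each track is a deliberate simplification: it keeps the count robust to jitter around the line, at the cost of ignoring people who cross and then return.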

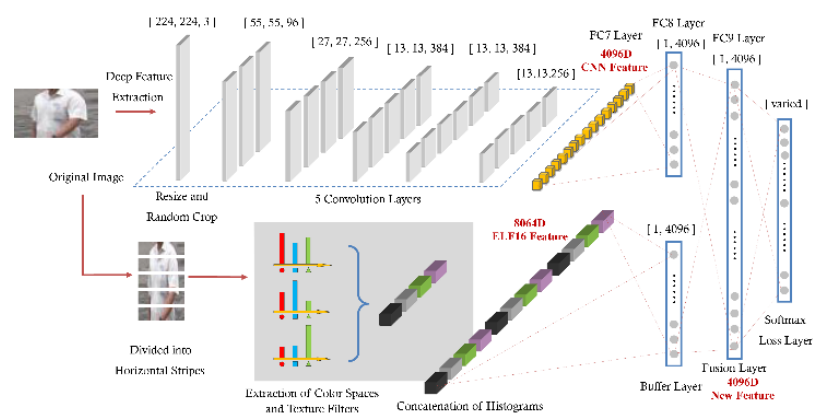

Re-identification using Deep Learning

Person re-identification is the problem of matching persons across several camera views. The aim of this thesis was to develop a system that combines the outputs of different systems that complement each other. These include a system that extracts high-level features using state-of-the-art Deep Learning, a system that extracts mid-level features using dictionary learning, and a system that extracts low-level color and texture features from small image patches. The outputs are combined using two different fusion schemes. Compared to previous state-of-the-art systems, the proposed system improves the re-identification accuracy by up to 15%.
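The text does not say which two fusion schemes the thesis used. As one common possibility, the sketch below shows score-level fusion by a weighted sum of min-max normalized scores. The function names, the system names, the weights, and the scores are all assumptions made for illustration, not the thesis's actual method or data.

```python
from typing import Dict, List


def min_max_normalize(scores: List[float]) -> List[float]:
    """Rescale scores to [0, 1] so systems with different ranges are comparable."""
    lo, hi = min(scores), max(scores)
    if hi == lo:
        return [0.0 for _ in scores]  # no ranking information in constant scores
    return [(s - lo) / (hi - lo) for s in scores]


def fuse_scores(system_scores: Dict[str, List[float]],
                weights: Dict[str, float]) -> List[float]:
    """Weighted sum of normalized similarity scores, one fused score per gallery person."""
    n_candidates = len(next(iter(system_scores.values())))
    fused = [0.0] * n_candidates
    for name, scores in system_scores.items():
        for i, s in enumerate(min_max_normalize(scores)):
            fused[i] += weights[name] * s
    return fused


# Hypothetical similarity scores of one query against three gallery persons.
scores = {
    "deep": [0.9, 0.2, 0.4],          # high-level features
    "dictionary": [12.0, 3.0, 9.0],   # mid-level features, different scale
    "color_texture": [0.5, 0.6, 0.1], # low-level features
}
weights = {"deep": 0.5, "dictionary": 0.3, "color_texture": 0.2}  # assumed weights

fused = fuse_scores(scores, weights)
best_match = max(range(len(fused)), key=fused.__getitem__)
print(best_match, fused)
```

Normalizing before summing matters here because the three systems report scores on unrelated scales; without it, the system with the largest numeric range would dominate the fused ranking.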

Automatic analysis of sports facilities

Using a thermal camera to monitor sports arenas, this project examined how many people were using the arena and where they were positioned on the court. The goal is to optimise the utilisation of existing arenas by documenting their current use. The developed system requires only a simple initialisation, after which it runs automatically. Data are presented as weekly hour tables or as day graphs showing the arena’s utilisation during the day. The system has been used, with good results, in a pilot project running over 3 months and analysing 10 sports arenas in Aalborg municipality. The project has been granted funding for further development. For more info see http://www.create.aau.dk/bbh/
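A weekly hour table of the kind mentioned above could be built roughly as follows. This is a minimal sketch that assumes the system emits timestamped people counts and that each table cell holds the mean count for a given weekday and hour; the function name `weekly_hour_table` and the sample observations are hypothetical.

```python
from collections import defaultdict
from datetime import datetime
from typing import Dict, List, Tuple


def weekly_hour_table(
    observations: List[Tuple[datetime, int]]
) -> Dict[Tuple[int, int], float]:
    """Average the observed people count per (weekday, hour) cell.

    Weekday follows datetime.weekday(): 0 is Monday, 6 is Sunday.
    """
    sums = defaultdict(lambda: [0, 0])  # cell -> [total people, number of samples]
    for timestamp, people in observations:
        cell = (timestamp.weekday(), timestamp.hour)
        sums[cell][0] += people
        sums[cell][1] += 1
    return {cell: total / n for cell, (total, n) in sums.items()}


# Hypothetical observations: (time of measurement, people detected on the court).
observations = [
    (datetime(2024, 1, 1, 17, 0), 4),   # Monday, 17:00
    (datetime(2024, 1, 1, 17, 30), 8),  # Monday, 17:30
    (datetime(2024, 1, 2, 9, 15), 0),   # Tuesday, 09:15
]
table = weekly_hour_table(observations)
print(table)
```

Cells with no observations are simply absent from the result, which keeps "arena not measured" distinct from "arena measured and empty".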

The Automatic PoolTrainer

This project examined how multimodal user interaction can be used to create a pool trainer. The setup consists of a pool table, cues, balls, and so on. A projector and a camera are mounted in the ceiling above the pool table. The projector draws shapes (e.g. menus, lines, and circles) directly on the surface of the table, and the camera captures the positions and movements of the balls. The user addresses the system either by pressing buttons on a “virtual menu” projected on the pool table or by speaking to an agent called “James”. The project has generated multiple scientific articles and student projects and has attracted attention in both local and international media.

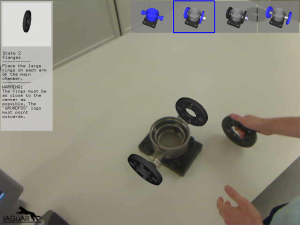

IAGUAR

IAGUAR (Interactive Assembly Guide using Augmented Reality) proposed using augmented reality to assist in the assembly of complex objects, such as the pumps the project focused on. The system uses a display to show the user the augmented reality, which consists mainly of video input from a camera positioned near the user’s head. The object is located in the video stream, and using the object’s position and orientation, the next step in the assembly process is shown as 3D graphics overlaid on the video stream from the camera. With this setup, even people with no prior experience were able to assemble a pump successfully.

Modeling Falling and Accumulating Snow

This project sought to mimic falling and accumulating snow in a realistic manner, which is useful for traditional and animated movies as well as games. To do this, sophisticated wind and snowflake simulations were developed and implemented. Compared to real snow, the simulation was very impressive, and the method developed is applicable in many scenarios.

An example of the project's results. The snow accumulates realistically: for example, the gap between the two leftmost houses has created a small pile of snow on the house to the right.